Element Design System

152% Gross ROI | $271K Net Annual Savings | 34 Teams Adopted

Overview

I led an enterprise design system program across 75+ applications and 48 brands with no adoption mandate and through two defundings. The system scaled to 34 active teams, reduced WCAG violations by 73%, and produced an estimated $271K in net annual savings on a $519K annual investment, measured through time-survey deltas and audits.

Johnson Controls operated 75+ applications across 48 brands with no shared design infrastructure. Fragmentation was not a style problem; it was an execution tax that slowed releases, increased compliance risk, and made multi-product sales demos incoherent.

- Scope: 75+ applications, 48 brands

- Constraints: No mandate, no authority, legacy Angular, two defundings

- Key decisions: Pilot-first rollout, Adobe XD to Figma migration, token architecture, micro-adoption tiers, recurring alignment model

- Results: 34 teams, 73% fewer WCAG violations, $271K net savings, 7.9-month payback

The Problem

Johnson Controls operated 75+ applications across 48 brands with no shared design infrastructure. Every product team rebuilt the same components independently, slowing time-to-market, inflating costs, and making it impossible for sales teams to present a coherent multi-product story.

- 78% of surveyed designers and developers reported difficulty maintaining UI consistency

- 3 to 5 hours per week lost per designer recreating existing components and resolving style conflicts

- 1,000+ icons in active use with no naming conventions and no path to shared infrastructure

My assigned problem: Build a design system from nothing, with no guaranteed funding, no precedent, and no formal authority over the 34 teams who would need to adopt it.

System at a Glance

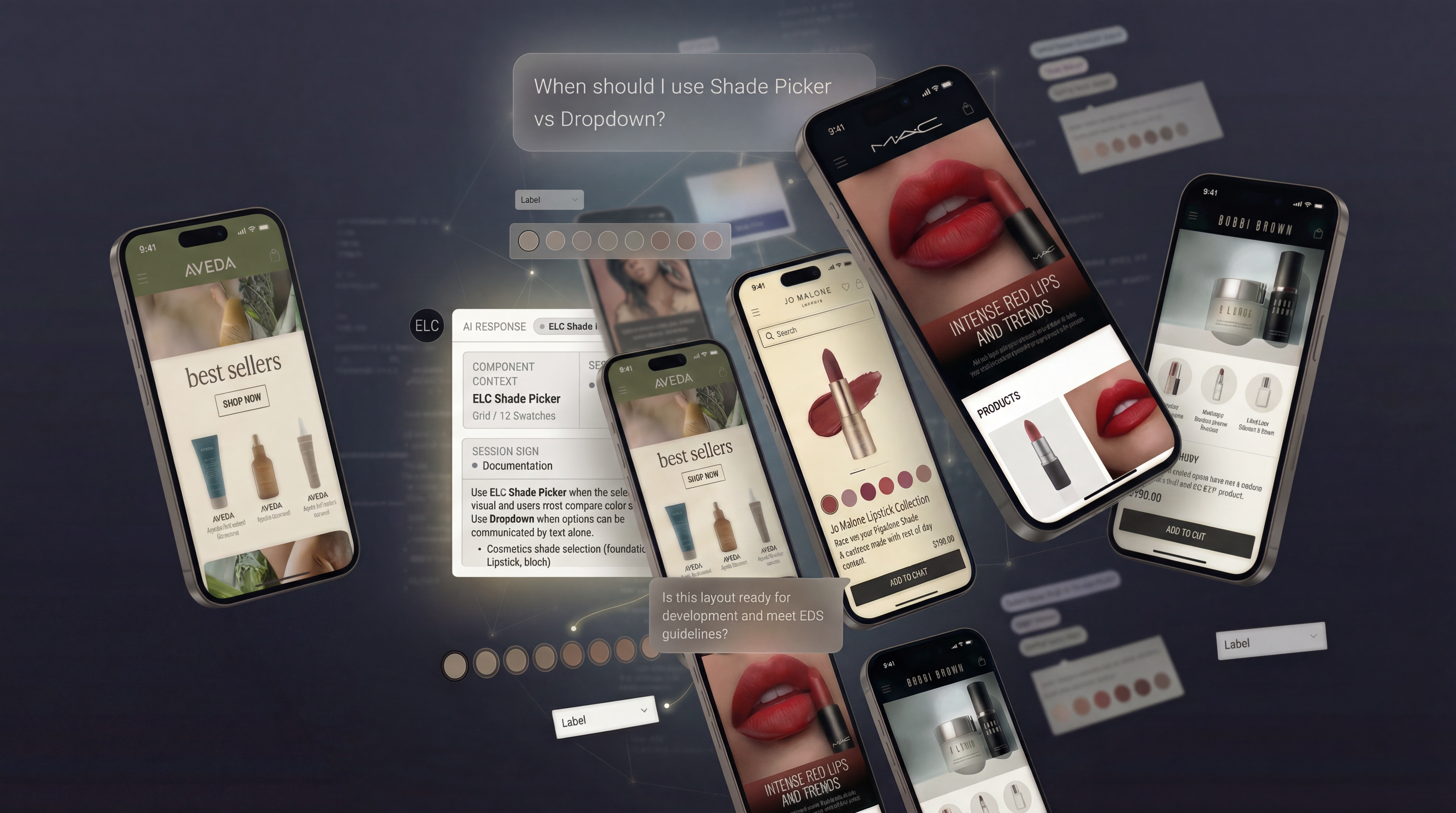

I designed EDS to solve three compounding failures: product-level fragmentation, recurring design-to-dev handoff breakdowns, and the absence of shared infrastructure to prevent either from recurring.

Three artifacts defined the system: scope, governance, and architecture. Each one did real organizational work.

My Role & Team

- My Role: UX/UI Designer II (2018–2022) → Senior UX/UI Designer, Design System Lead (2022–2025)

- Year: 2018–2025

- Company: Johnson Controls (JCI)

- Product: Element Design System (EDS) v1.4.0

- Team: At peak, 3 UX Designers (Ben Hapka, Kateryna Turchyna, Benjamin Shimazu) + 1 Senior Front-End Developer (Brian Kingsbury). Started as a 2-person team. I was the only constant across all seven years.

- Platform(s): Web applications (B2B SaaS)

- Project Status: Sunset in 2025 due to the OpenBlue profitability reorganization. At defunding: 34 active teams, 8.7/10 satisfaction, $271K in modeled net savings, and a backlog of approved features teams were waiting on.

Decisions I owned:

- Roadmap and release strategy (v1.0 through v1.4)

- Token architecture and multi-brand theming model

- Governance model: contribution, versioning, and deprecation lifecycle

- Figma library architecture across three UI Kits

- Adoption framework, pilot team selection, and enablement

- Standards: design language, interaction patterns, typography, and accessibility acceptance criteria

What I influenced but did not own: Front-end implementation decisions (owned by Brian Kingsbury). Sprint-level engineering prioritization on adopting teams. Budget and headcount decisions (owned by director-level leadership).

What was decided above me: Both defunding decisions. The denial of a second front-end engineer in 2024. The OpenBlue reorganization that ended the program.

Four Decisions That Defined the Program

Iteration Timeline (2018–2025)

- 2018–2019: Grassroots start, initial inventory and early library attempts

- 2020: Program restarted under new leadership, migration to Figma approved, foundations rebuilt for scale

- Q2 2022: v1.0 launched, production scale began

- 2023: NPM package shipped (Q3 2023), code adoption started

- 2024: Adoption plateau diagnosed, micro-adoption tiers introduced, alignment model strengthened

- 2025: Program sunset due to profitability reorganization, governance and intake artifacts left in place

1. Pilot-first, not big-bang rollout

The first real test was a deliberate two-team pilot: MyJCI first (led by a senior designer embedded on the product team), then OpenBlue Enterprise Manager (with the Design Operations Lead). MyJCI was a greenfield product with no legacy code and no refactoring risk, which made it a viable first partner for a system that was still in alpha.

The context. The system was mid-migration from Adobe XD to Figma, not yet stable, not yet v1.0. The pilot wasn't a showcase of a finished product; it was a structured alpha test designed to find what was broken before scaling to everyone.

The initial rejection. The MyJCI engineering lead's first answer was no. The team was mid-sprint on a compliance deadline with no capacity for anything outside core work.

The reframe. I narrowed the scope and repositioned the ask: instead of "adopt our system," the pitch became "help us test and stabilize it before we release it to everyone." That framing removed the risk perception. They weren't being asked to depend on an unfinished system. They were being asked to help shape it.

The result. They said yes. That alpha pilot surfaced 10 component enhancements, gaps in training materials, and workflow issues that wouldn't have been caught until v1.0 was already in production. The feedback directly shaped what shipped. Both pilot teams became the internal references that de-risked every adoption conversation that followed.

2. Migrate to Figma and build the token architecture before scaling

2a. Platform: Migrate to Figma

When new executive leadership created an opening in 2020, I pushed to migrate from Adobe XD to Figma before growing the library. Figma's component management, multi-editor collaboration, and library sharing would make everything downstream faster and more maintainable. The cost: 6 weeks of rebuild time. At 34 teams, maintaining Adobe XD libraries would have required an estimated 8–10 hours/week in manual sync work per library update. Figma eliminated that entire cost category.

2b. Architecture: Three-tier token hierarchy

The core problem: how do you build one component library for 48 brands without maintaining 48 versions of every component? I designed a three-tier token hierarchy to solve it. Before building, I worked with the front-end team to align Figma Variable naming with EDS token conventions already in use by development. Naming mismatches would have broken the design-to-code handoff at scale.

- Tier 1 (raw values): Hex codes, pixel values, and font stacks with no semantic meaning.

- Tier 2 (semantic tokens): Purpose-driven names like "color-action-primary" that mapped to raw values per brand.

- Tier 3 (component tokens): Component-specific bindings that referenced semantic tokens but could be overridden per brand when needed.
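The sketch below shows how a component token might resolve to a raw value for a given brand across the three tiers. It is minimal and written in TypeScript. The brand IDs, token names, and hex values are hypothetical placeholders, not the actual EDS token set. The override map is one assumed way to model "overridden per brand when needed"; the real EDS mechanism ran through Figma Variables and is not shown here.

```typescript
// Hypothetical token names and values for illustration; not the actual EDS token set.
type BrandId = "brandA" | "brandB";

// Tier 1: raw values with no semantic meaning.
const rawTokens = {
  "blue-600": "#0062cc",
  "green-700": "#1a7f37",
  "space-4": "16px",
  "space-5": "20px",
} as const;
type RawTokenName = keyof typeof rawTokens;

// Tier 2: semantic (purpose-driven) tokens, mapped to raw tokens per brand.
type SemanticTokenName = "color-action-primary" | "space-inset-default";
const semanticTokens: Record<BrandId, Record<SemanticTokenName, RawTokenName>> = {
  brandA: { "color-action-primary": "blue-600", "space-inset-default": "space-4" },
  brandB: { "color-action-primary": "green-700", "space-inset-default": "space-4" },
};

// Tier 3: component tokens reference semantic tokens, never raw values directly.
type ComponentTokenName = "button-background" | "button-padding";
const componentTokens: Record<ComponentTokenName, SemanticTokenName> = {
  "button-background": "color-action-primary",
  "button-padding": "space-inset-default",
};

// Optional per-brand overrides for individual component tokens (assumed mechanism).
const componentOverrides: Partial<
  Record<BrandId, Partial<Record<ComponentTokenName, RawTokenName>>>
> = {
  brandB: { "button-padding": "space-5" },
};

// Resolve Tier 3 -> Tier 2 -> Tier 1, honoring a brand override if one exists.
function resolveComponentToken(brand: BrandId, token: ComponentTokenName): string {
  const override = componentOverrides[brand]?.[token];
  const rawName = override ?? semanticTokens[brand][componentTokens[token]];
  return rawTokens[rawName];
}

console.log(resolveComponentToken("brandA", "button-background")); // #0062cc
console.log(resolveComponentToken("brandB", "button-background")); // #1a7f37
console.log(resolveComponentToken("brandB", "button-padding")); // 20px
```

The same component token, `button-background`, yields a different color per brand without a second copy of the component. Only `brandB` departs from the shared semantic spacing, and only where its override says so.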

The tradeoff was complexity. In v1.0, overlapping semantic tokens caused inconsistent usage. The v1.1 audit consolidated 14 redundant tokens and added a governance rule: every new token required a usage description and at least two component references before being added. The result: brand-switching through Figma Variables without duplicating the library.
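The v1.1 governance rule can be expressed as a simple check that runs before a token is accepted. This TypeScript sketch is minimal and makes several assumptions. The `TokenProposal` shape and the `validateTokenProposal` function are hypothetical. Counting only *distinct* component references is also an assumption about how "at least two" should be read.

```typescript
// Hypothetical proposal shape; field names are illustrative, not an EDS schema.
interface TokenProposal {
  name: string;
  usageDescription: string;
  componentReferences: string[];
}

// Returns a list of rule violations; an empty list means the proposal passes.
function validateTokenProposal(proposal: TokenProposal): string[] {
  const errors: string[] = [];
  if (proposal.usageDescription.trim() === "") {
    errors.push(`${proposal.name}: missing usage description`);
  }
  // Count distinct components so listing the same one twice does not satisfy the rule.
  const distinctRefs = new Set(proposal.componentReferences).size;
  if (distinctRefs < 2) {
    errors.push(
      `${proposal.name}: needs at least 2 component references, found ${distinctRefs}`,
    );
  }
  return errors;
}

const accepted = validateTokenProposal({
  name: "color-feedback-warning",
  usageDescription: "Background for inline warning banners",
  componentReferences: ["Alert", "Banner"],
});
const rejected = validateTokenProposal({
  name: "color-blue-alt",
  usageDescription: "",
  componentReferences: ["Button", "Button"],
});
console.log(accepted.length); // 0
console.log(rejected.length); // 2
```

A gate like this targets the failure seen in v1.0: a token that cannot be described, or that only one component uses, is rejected before it can overlap with existing semantic tokens.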

3. Redesign the adoption model, not just the components

By 2024, the adoption data revealed a specific problem. The 7.1/10 adoption excitement score (anonymous survey, 34 respondents across 12 teams) told me something mid-program satisfaction scores (8.5/10) couldn't: teams liked the system but were ambivalent about adopting it. The barrier was entry cost, not quality. Building more components would not solve an adoption model problem.

Core products ran on legacy Angular 8 code with custom UI components. Asking teams to adopt EDS was effectively asking engineering leads to approve a legacy code refactor mid-sprint. I designed a three-level on-ramp where each level had a defined entry bar, scoped sprint commitment, and demonstrable ROI teams could present to engineering leads before committing further.

| Level | Entry Point | Sprint Investment | Demonstrable ROI |
|---|---|---|---|
| Foundations | Apply EDS color, typography, and spacing tokens | 1–2 days. No component refactor required. | Brand consistency across UI without touching legacy component code |
| Component Integration | Replace 1–3 highest-usage Figma components with EDS equivalents | 3–5 days per component set | Measurable design velocity gain in the next sprint |
| Full Ecosystem | NPM package + Developer Hand-off Template + full governance integration | Full sprint commitment, including legacy code updates | Full savings trajectory: team enters the $790K gross savings model |

All 5 development teams that integrated EDS in 18 months entered through this framework. Engineering leads could approve Level 1 without a sprint commitment. Full adoption became a logical next step after two successful tiers, not a prerequisite for getting started.

4. Install a cross-functional alignment model

The adoption plateau in late 2023 surfaced a tension between the EDS roadmap and product team priorities. Two product managers pushed back on the Q1 2024 component release schedule, arguing their teams couldn't absorb new components while shipping compliance features.

Rather than escalate, I proposed a joint prioritization session where PMs ranked which EDS components would most reduce their teams' sprint burden. That session resequenced the Q1 roadmap: we deprioritized three components the EDS team wanted to ship and accelerated two that PMs identified as highest-impact for compliance workflows.

The jointly prioritized components were adopted significantly faster. For the two compliance components in Q1 2024, the adopting teams implemented them within one sprint. Components the EDS team sequenced alone typically lagged 2–3 sprints. Actual timelines varied with component complexity and with where each team was in its legacy Angular migration. The consistent pattern was ownership: when PMs chose the components, their teams prioritized the work rather than deferring it.

Quarterly alignment became a lightweight operating system: a shared intake template, explicit decision rights, and a sequenced roadmap tied to adoption impact and compliance risk. When the program was defunded, product teams could keep using the same mechanism without the core EDS team.
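The intake-and-sequencing mechanism can be sketched as a data shape plus two small functions. The first flags intake requests that are missing required fields; the second orders the complete ones. The source does not publish the actual template fields or sequencing rules. The `IntakeRequest` fields, the three-level scale, and the ordering (compliance risk first, then adoption impact) are therefore all assumptions for illustration.

```typescript
// Hypothetical intake template; the real EDS template fields are not documented here.
type Level = "low" | "medium" | "high";

interface IntakeRequest {
  component: string;
  requestingTeam: string;
  complianceRisk: Level;
  adoptionImpact: Level;
}

const REQUIRED_FIELDS: (keyof IntakeRequest)[] = [
  "component",
  "requestingTeam",
  "complianceRisk",
  "adoptionImpact",
];

const levelRank: Record<Level, number> = { low: 0, medium: 1, high: 2 };

// Lists required fields that are absent or empty in a draft request.
function missingFields(draft: Partial<IntakeRequest>): (keyof IntakeRequest)[] {
  return REQUIRED_FIELDS.filter((field) => !draft[field]);
}

// Orders complete requests: higher compliance risk first, ties broken by adoption impact.
function sequenceRoadmap(requests: IntakeRequest[]): IntakeRequest[] {
  return [...requests].sort(
    (a, b) =>
      levelRank[b.complianceRisk] - levelRank[a.complianceRisk] ||
      levelRank[b.adoptionImpact] - levelRank[a.adoptionImpact],
  );
}

const roadmap = sequenceRoadmap([
  { component: "Tooltip", requestingTeam: "Team A", complianceRisk: "low", adoptionImpact: "high" },
  { component: "Data table", requestingTeam: "Team B", complianceRisk: "high", adoptionImpact: "medium" },
  { component: "Date picker", requestingTeam: "Team C", complianceRisk: "high", adoptionImpact: "high" },
]);
console.log(roadmap.map((r) => r.component).join(", ")); // Date picker, Data table, Tooltip
console.log(missingFields({ component: "Stepper", requestingTeam: "" }).join(", "));
// requestingTeam, complianceRisk, adoptionImpact
```

Ranking compliance risk ahead of adoption impact loosely mirrors the Q1 2024 resequencing, where compliance-critical components were accelerated. A real team would tune or replace this ordering through the same decision-rights process.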

Impact: 2022–2024

All metrics reflect the two years after v1.0 launched and reached production scale.

- Baseline window: Spring 2023

- Follow-up window: Spring to Summer 2024

- Population: Designers and developers actively recreating or maintaining UI components and tokens during the measurement windows

- Method: Paired internal time surveys, plus quarterly UI consistency and accessibility audits on a fixed sampled screen set

- Bias controls used: Same survey instrument across both windows, consistent sampled screen set for audits, and results reported as deltas (not absolutes)

The program ran as an unfunded grassroots effort from 2018–2021 before receiving sustained investment.

Business Outcomes

Measured (observed deltas)

- Iteration cycle time: 8.5 days → 4.5 days (47% reduction)

- Design consistency audit: 56 → 87 / 100

- Accessibility violations (WCAG 2.1 AA): 127 → 34

- Design-dev clarification requests: 27% reduction

Modeled (assumptions used for dollar impact)

- Population: 21 designers, 5 developers

- Wage rates: $44.71/hr (design), $47.60/hr (dev)

- Working days: 240

- Baseline vs follow-up windows: Spring 2023 vs Spring to Summer 2024

Triangulation (non-survey cross-check)

- Enterprise sales enablement: The unified interface supported client-facing demonstrations. Buyers expected a cohesive multi-product story, and EDS made that story possible across 75+ applications.

- 152% gross ROI with a 7.9-month payback period. $790K+ gross savings from eliminated component recreation time against $519K annual team investment (3.5 FTEs), yielding $271K net savings.

- Note on ROI: This is a directional model to estimate opportunity cost, not a finance-certified ROI.

Methodology: Time surveys measured repetitive UI infrastructure work. This included time spent recreating existing components, maintaining variants, resolving token questions, and clarifying handoffs due to missing standards. Baseline: 5.2 hrs/day per person (Spring 2023). Follow-up: 2.4 hrs/day per person (Spring to Summer 2024). Population: 21 designers at $44.71/hr and 5 developers at $47.60/hr over 240 working days. Survey prompt (summarized): "In a typical day, how many hours do you spend on repetitive UI work that should be reusable?" Triangulation: direction and magnitude were cross-checked against quarterly consistency and accessibility audit deltas, plus the reduction in design-dev clarification requests. Results were reviewed for reasonableness with team leads.
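The dollar figures follow directly from the inputs above, and the TypeScript sketch below reproduces the arithmetic. It assumes, as the stated totals imply, that "gross ROI" means gross savings divided by annual investment. It also assumes that payback means investment divided by monthly gross savings. The `roiModel` function and its field names are illustrative, not a tool used in the program.

```typescript
interface RoiInputs {
  baselineHoursPerDay: number; // repetitive UI work per person, baseline window
  followUpHoursPerDay: number; // same measure, follow-up window
  designers: number;
  designerRate: number; // $/hr
  developers: number;
  developerRate: number; // $/hr
  workingDays: number;
  annualInvestment: number; // $/yr
}

function roiModel(i: RoiInputs) {
  const hoursSavedPerPersonPerDay = i.baselineHoursPerDay - i.followUpHoursPerDay;
  // Combined hourly cost of everyone in the surveyed population.
  const teamHourlyCost = i.designers * i.designerRate + i.developers * i.developerRate;
  const grossSavings = hoursSavedPerPersonPerDay * i.workingDays * teamHourlyCost;
  const netSavings = grossSavings - i.annualInvestment;
  return {
    grossSavings,
    netSavings,
    grossRoiPct: (grossSavings / i.annualInvestment) * 100, // gross savings / investment
    netRoiPct: (netSavings / i.annualInvestment) * 100, // net savings / investment
    paybackMonths: i.annualInvestment / (grossSavings / 12),
  };
}

const result = roiModel({
  baselineHoursPerDay: 5.2,
  followUpHoursPerDay: 2.4,
  designers: 21,
  designerRate: 44.71,
  developers: 5,
  developerRate: 47.6,
  workingDays: 240,
  annualInvestment: 519000,
});

console.log(Math.round(result.grossSavings)); // 790884
console.log(Math.round(result.netSavings)); // 271884
console.log(result.grossRoiPct.toFixed(0)); // 152
console.log(result.paybackMonths.toFixed(1)); // 7.9
```

With these inputs the model gives about $790.9K gross, $271.9K net, a 152% gross ROI, and a 7.9-month payback. It also reports the conventional net ROI (net savings ÷ investment), which is about 52%, for readers who use ROI in the finance sense.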

Production Footprint

- Code adoption: 5 dev teams integrated EDS via NPM within 18 months (Q3 2023 to Q1 2025)

- Design adoption: 34 teams used EDS-spec'd designs in standard handoff workflows

- Constraint: Legacy Angular 8 stacks required ~12 sprints of refactoring per product for full NPM integration

- What we did instead: Micro-adoption let teams start with tokens and specs immediately while engineering planned migration on their own timeline

The result, in the same visual language used to open the problem statement:

Team Efficiency and Quality

Metrics are drawn from two paired internal surveys: a Spring 2023 baseline and a Spring to Summer 2024 follow-up, covering 21 designers and 5 developers actively recreating components. Time savings were calculated by comparing average minutes spent on component creation, maintenance, and token lookup across both periods. Design consistency scores come from quarterly audits run across the same sampled screen set each cycle. Accessibility and icon recognition data are from structured screen sampling and cognitive load testing conducted as part of the v1.1 audit cycle.

- 47% reduction in design iteration cycle time (8.5 days to 4.5 days)

- 50% faster designer onboarding (4–6 weeks to 2–3 weeks to full productivity)

- 27% fewer design-dev clarification requests after Developer Hand-off Template launch

- Design consistency: 56 to 87/100 (+55% across quarterly audits)

- 73% reduction in WCAG 2.1 AA violations (127 to 34 across sampled screens)

- 89% icon recognition rate (vs. 62% pre-EDS, after standardizing from 1,000+ to 600 icons based on cognitive load testing)
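The headline percentages are relative changes against each baseline. The small TypeScript helper below reproduces them from the before/after values listed above. It is hypothetical and intended only as a check.

```typescript
// Relative change from a baseline, in percent (negative = reduction).
function percentChange(before: number, after: number): number {
  return ((after - before) / before) * 100;
}

const metrics: Array<[string, number, number]> = [
  ["Iteration cycle time (days)", 8.5, 4.5],
  ["Design consistency score", 56, 87],
  ["WCAG 2.1 AA violations", 127, 34],
];

for (const [label, before, after] of metrics) {
  console.log(`${label}: ${percentChange(before, after).toFixed(1)}%`);
}
// Iteration cycle time (days): -47.1%
// Design consistency score: 55.4%
// WCAG 2.1 AA violations: -73.2%
```

Icon recognition (62% to 89%) is already a rate, so it reads more accurately as a 27-percentage-point gain. Passing it through `percentChange` would report a relative change of about 43.5%, which is a different claim.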

What the Defunding Means

EDS was defunded twice due to leadership and org profitability shifts.

- 2019: Platform investments were deprioritized in favor of product delivery.

- 2025: OpenBlue reorganized around profitability and eliminated multiple cross-cutting programs.

What I took from it: without institutionalized sponsorship and clear business measurement, design system work is treated as discretionary. That is why the ROI and savings methodology became a core deliverable.

At sunset: 34 active teams, 8.7/10 satisfaction in the final retrospective, and a backlog of approved features. The governance lifecycle and contribution model were documented so teams could continue basic operations without the original core team.

Durability proof point: After defunding, product teams continued using the same artifacts to run shared component decisions without the original EDS team: (1) the structured intake template (required fields), (2) the prioritization and sequencing rules tied to adoption impact and compliance risk, and (3) the documented decision-rights model between EDS and engineering leads.

Reflection

What I'd Do Differently (Summary)

- Start with a cost audit, not a component inventory: quantify fragmentation before building

- Staff a front-end engineer as a co-founder: build code in parallel with design from day one

- Track excitement, not just satisfaction: adoption intent is a leading indicator

1. Without embedded executive sponsorship, a design system is one leadership change away from shutdown. Two defunding events proved this to be a structural truth, and that lesson reshaped how I built the business case from 2020 onward. The $271K net savings number exists because I insisted on tracking it from the start, knowing the day would come when I'd need it. Every proposal after 2020 was framed in business outcomes, not design quality.

What I'd change: Start with a cost audit, not a component inventory. Quantify what fragmentation costs before writing a single spec. Without a business case, a design system is always discretionary spend.

2. A front-end engineer should be a co-founder, not a later hire. Design validation is cheaper than code, so shipping Figma components first gave us a tested, stable set to build the NPM package against. But the timeline makes the cost visible: our NPM package launched Q3 2023, nearly three years after funding restarted. If a front-end engineer had joined in 2020 and built code in parallel with design, we would have reached production teams years earlier. This is now a non-negotiable ask in any design system staffing conversation.

3. Measure excitement, not just satisfaction. The 7.1/10 adoption excitement score was the most useful data point in the entire project. It told me something mid-program satisfaction scores (8.5/10) couldn't: teams were happy with the system but ambivalent about adopting it. That distinction, "likes it" versus "will use it," required a different solution: the micro-adoption framework, not more components. I now track adoption excitement alongside adoption rate from day one.